
Last week I had the pleasure of going to Southwest Research Institute (SwRI) to attend a ROS-Industrial training session. I’ve been insanely busy for the past few months writing computer vision and other code for a fairly substantial Robot Operating System (ROS) project. I’ve been converted from OpenCV over to the dark side of ROS, as ROS’s message bus, modular nature, and build tools are absolutely phenomenal. Hopefully a lot of that code will go back to the community once my employer signs the contributor agreement. I’ve gotten to know a lot about the sensor side of ROS, but I wanted to round out my knowledge of the actuator side of things. This ROS-Industrial session seemed like a good place to do just that, and also to get acquainted with more people working in manufacturing.

SwRI has always had a mythical place in my mind, mainly because all the cool kids got to go there while I got left behind at the lab. When I was in undergrad the RHex robot went there for testing, and while I was at Cybernet our DARPA Urban Challenge crew got to go there while I stayed home to man the fort. A few months ago SwRI reached out to me and I asked if I could perhaps help with ROS-Industrial. I’ve been trying to get some code and documentation done for them, but I’ve been so busy I haven’t made as much progress as I would have liked. SwRI is currently the maintainer of ROS-Industrial, and along with the OSRF they are making great strides in improving the usability of ROS in industrial settings.

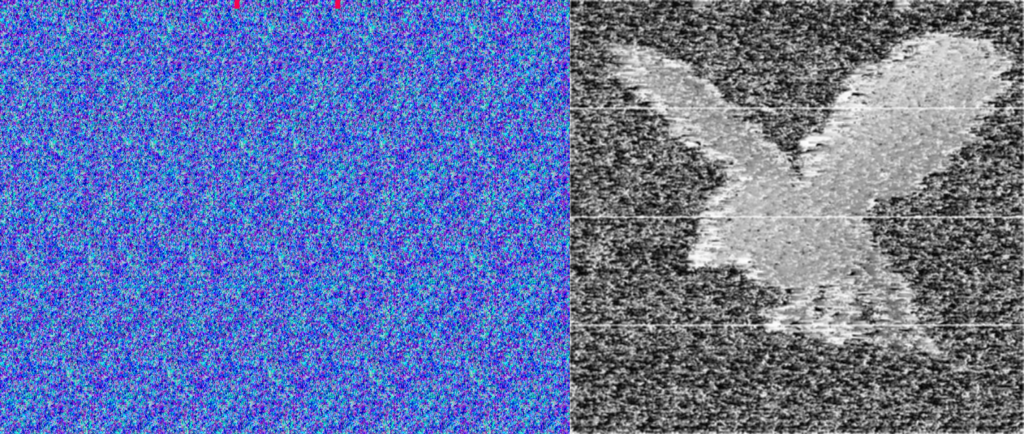

The tutorials published and presented by SwRI were excellent and very well polished. They made a conscious effort to have the tutorials range from high-level overviews for decision makers all the way to nuts-and-bolts code introductions for programmers and integrators. I really hope they publish more of the tutorials, as they were exceptionally well put together, relevant, and well thought out. To be certain, what SwRI is trying to accomplish with ROS-Industrial is no small feat, as you can see from the video of their 2013 Automate demo at the top of the page. At the ROS-Industrial training session SwRI walked us through the high-level architecture of this system (all of the components are FOSS software!) at a level where I think I could probably recreate it given a few weeks of coding. For a single-day session I thought they covered a lot of ground, and the demos they had of ROS-Industrial were incredible. In addition to the 2013 Automate demo they had another robot arm doing an exceptionally complex deburring maneuver around a complex bent puzzle piece. Another demo showed on-line object tracking and path planning for robotic finishing of automotive parts. I captured a few of the demos in a short video.

In addition to the tutorials I picked up a few new and interesting libraries from the other attendees. One that stood out was MTConnect, which is a free and open XML/HTTP standard for robots and CNC software to communicate their state and status. It looks pretty cool and there are already some open libraries out on GitHub. Another cool suite of tools is this EPICS PLC communication package put out by the Paul Scherrer Institute in Switzerland. There also seems to be a mirror of it at Argonne National Laboratory. Apparently CERN uses a lot of PLCs and they were insistent that all PLCs used at CERN had open Linux drivers. EPICS stands for Experimental Physics and Industrial Control System. I haven’t looked too deeply into the package but it seems like it could be handy.
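
To give a flavor of what reading machine state from an MTConnect-style XML document might look like, here is a minimal Python sketch using only the standard library. The sample XML is hand-written and simplified by me (no namespaces, made-up device name and `dataItemId` values), so treat it as an illustration of the idea rather than what a real MTConnect agent returns. The `read_state` helper is likewise hypothetical.

```python
import xml.etree.ElementTree as ET

# Hand-written, simplified sample loosely modelled on an MTConnect "streams"
# document. Assumption: real agent responses carry XML namespaces and many
# more attributes; the device name and dataItemId values here are made up.
SAMPLE = """\
<MTConnectStreams>
  <Streams>
    <DeviceStream name="demo_cnc">
      <ComponentStream component="Linear" name="X">
        <Samples>
          <Position dataItemId="x_pos" timestamp="2013-06-01T12:00:00Z">12.5</Position>
        </Samples>
      </ComponentStream>
      <ComponentStream component="Controller" name="controller">
        <Events>
          <Execution dataItemId="exec" timestamp="2013-06-01T12:00:00Z">ACTIVE</Execution>
        </Events>
      </ComponentStream>
    </DeviceStream>
  </Streams>
</MTConnectStreams>
"""


def read_state(xml_text):
    """Return {dataItemId: text value} for every sample and event found."""
    root = ET.fromstring(xml_text)
    state = {}
    # Walk every <Samples> and <Events> container and collect its children.
    for group in ("Samples", "Events"):
        for container in root.iter(group):
            for item in container:
                state[item.get("dataItemId")] = (item.text or "").strip()
    return state


if __name__ == "__main__":
    for item_id, value in read_state(SAMPLE).items():
        print(item_id, "=", value)
```

In practice the XML would come from an HTTP request to an agent rather than a string literal, and the namespaces would need handling, but the parsing idea stays about the same.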